The AI video generation landscape shifts rapidly as new platforms challenge established players. Higgsfield AI emerges as a unified ecosystem integrating multiple cutting-edge models, while Wan 2.5 introduces synchronized audio and advanced cinematic controls. These tools represent the next wave of AI-native filmmaking, offering creators unprecedented control over camera movement, lighting, and narrative flow.

Key Takeaways

- Higgsfield currently aggregates multiple video models (Sora 2, Veo 3.1, WAN, Kling, Minimax) under one interface.

- Wan 2.5 supports native audio generation with lip-sync and multi-modal inputs, with clip lengths up to 10 seconds.

- Access/pricing: Higgsfield has public paid tiers (from $9/mo), while Wan 2.5 is usable via multiple hosts/APIs rather than a strict waitlist.

- Camera control on Wan 2.5 includes natural-language moves like dolly/zoom/FPV and works via presets on hosts such as Higgsfield.

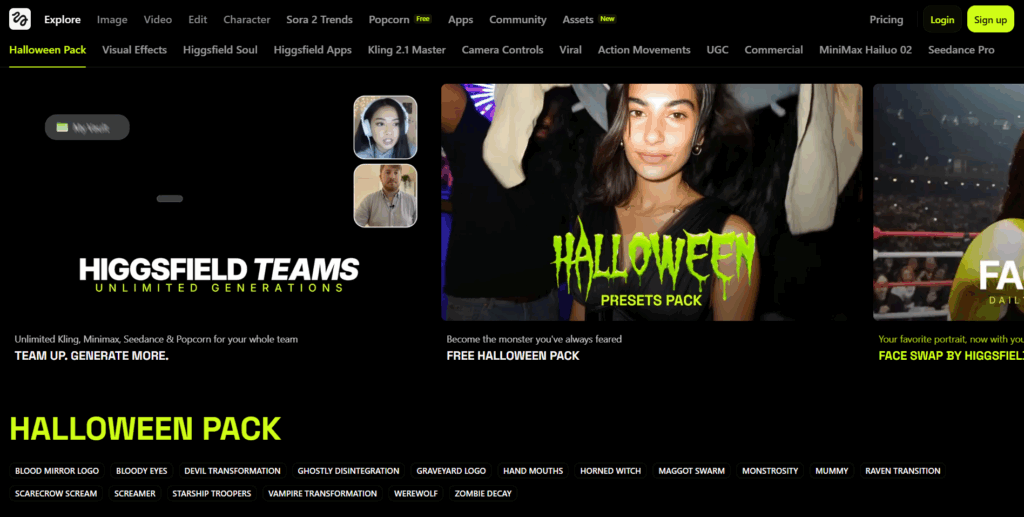

Higgsfield AI — Multi-Model Video Studio

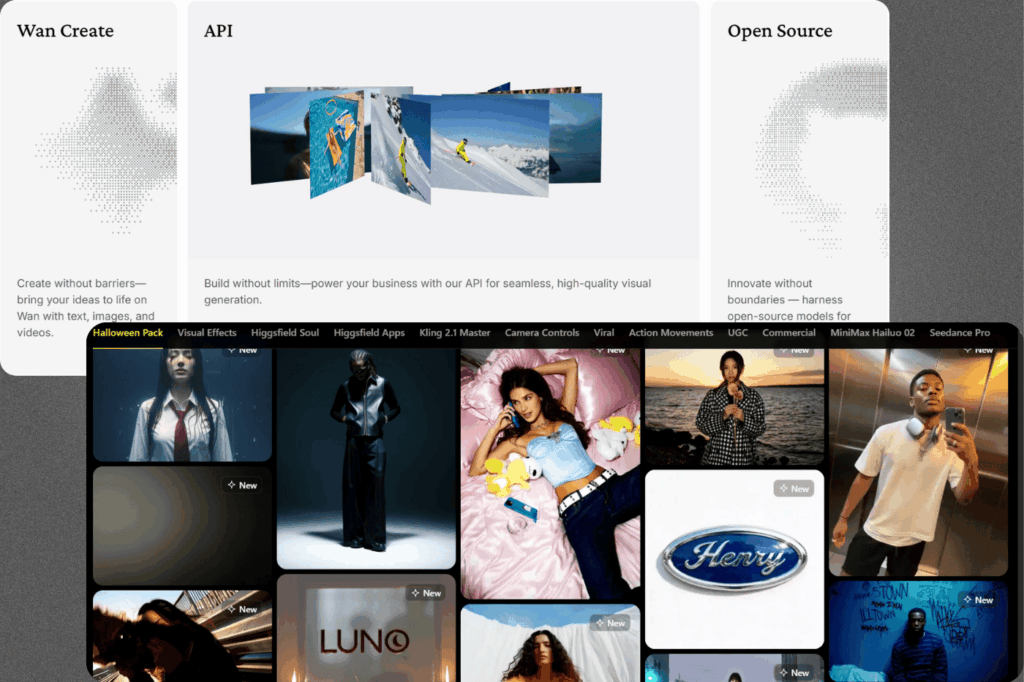

Image Source: higgsfield

Higgsfield is a hosted AI video platform that unifies Sora 2, Veo 3.1, WAN 2.5, Kling, and Minimax behind one interface, plus house tools (DOP camera controls, Upscale, Enhancer). It targets creators who want to test and mix models without juggling multiple sites or APIs.

Access & Pricing (2025)

- Public plans: Basic ($9), Pro ($29), Ultimate ($49) with credits/priority; optional 72-hour Unlimited pass that unlocks Veo 3.1, Sora 2 tiers, Upscale/Enhancer.

- Credit packs are also available for bursts.

Special Features

- Model switcher: run the same prompt across Sora 2, Veo 3.1, WAN 2.5, etc., from one workspace.

- Cinematic camera controls: preset moves like dolly, crane, FPV, rack focus.

- Audio-enabled models: Veo 3.1 and WAN 2.5 generations with native audio/lip-sync (host-dependent).

How It Compares (Quick Matrix)

| Capability | Higgsfield (host) | Sora 2 (via Higgsfield) | Veo 3.1 (via Higgsfield) | WAN 2.5 (via Higgsfield/API) |
|---|---|---|---|---|
| Typical clip length | Varies by model | up to ~10–12s | ~8–12s (host-settings) | up to ~10s |
| Native audio | Model-dependent | Supported on host | Supported on host | Yes (lip-sync) |
| Resolution (gen) | Up to 1080p (model-hosted) | 1080p | 1080p | up to 1080p |
| Access/API | Hosted plans | Hosted | Hosted | Hosted + third-party APIs |

Sources: durations/resolution & audio claims from Higgsfield model pages, WAN 2.5 docs/hosts.

Limitations & Caveats

- 4K: most generations are 1080p; 4K generally requires upscaling rather than native output. Verify per model.

- Time & queues: jobs are asynchronous and queue-dependent across hosts; expect variable completion.

- Feature variance by model: camera moves and audio differ across engines (e.g., some moves are WAN-specific presets).

- API: Higgsfield is primarily a hosted studio; WAN 2.5 offers API via providers (e.g., KIE).
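
Because jobs are asynchronous and queue-dependent, any script that automates generation has to wait for completion rather than expect an immediate result. The following is a minimal sketch of polling with exponential backoff. It assumes a caller-supplied `get_status` function (hypothetical, standing in for whatever your host or provider exposes) and the status strings `"succeeded"` and `"failed"`, which are illustrative and not taken from any provider's documentation.

```python
import time
from typing import Callable


def wait_for_job(
    get_status: Callable[[], str],
    timeout_s: float = 600.0,
    first_delay_s: float = 2.0,
    max_delay_s: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Poll get_status() until it reports a terminal state or the timeout passes.

    get_status is hypothetical: wrap your provider's own status call in it.
    The timeout counts only the time spent sleeping between polls.
    """
    terminal_states = {"succeeded", "failed"}  # assumed names, adjust per host
    waited = 0.0
    delay = first_delay_s
    while True:
        status = get_status()
        if status in terminal_states:
            return status
        if waited >= timeout_s:
            raise TimeoutError(f"job still {status!r} after {waited:.0f}s of waiting")
        sleep(delay)
        waited += delay
        # Back off so a long queue does not translate into a flood of requests.
        delay = min(delay * 2, max_delay_s)


if __name__ == "__main__":
    # Simulated job that finishes on the third poll.
    fake_states = iter(["queued", "running", "succeeded"])
    print(wait_for_job(lambda: next(fake_states), sleep=lambda s: None))
```

Passing `sleep` as a parameter keeps the helper testable without real delays; in production you would leave the default in place.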

Why Pick Higgsfield?

- One subscription to trial multiple top models side-by-side, with extras like Unlimited Pass for heavy sprints.

- Built-in cinematic controls and utilities (Upscale/Enhancer) reduce hopping between tools.

Quick takeaway: If your workflow needs A/B testing across Sora 2, Veo 3.1, and WAN 2.5 with unified camera/audio options, Higgsfield is a pragmatic hub—just plan for 1080p outputs and host-dependent limits on length/audio.

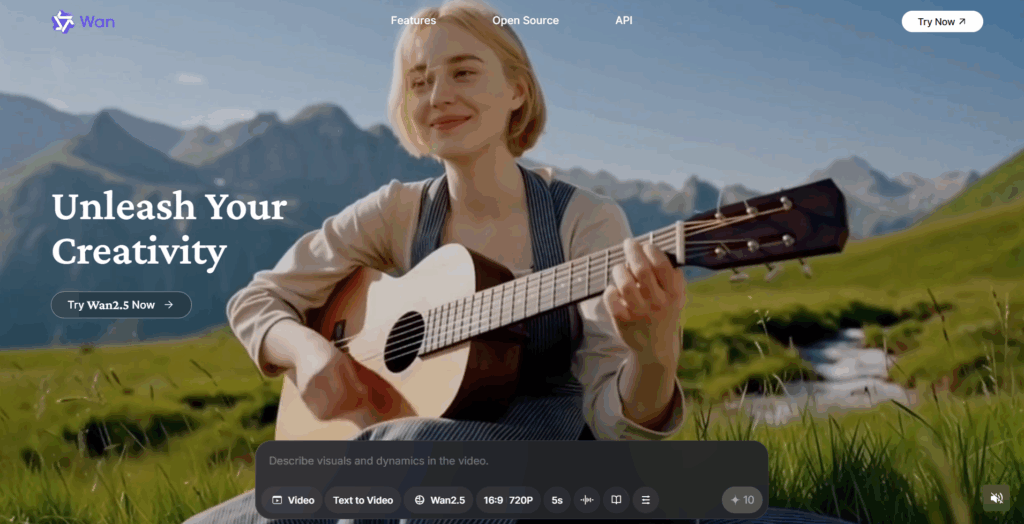

WAN 2.5 — Audio-Synchronized Video Generation

Image Source: WAN 2.5

WAN 2.5 is a text/image-to-video model that generates video and audio in a single pass—dialogue, ambience, music, and lip-sync—so you don’t need a separate audio workflow. Typical outputs are up to 10s at 24 fps and 480p/720p/1080p with multiple aspect ratios.

Access & Pricing (2025)

- API/Cloud hosts: Available via Alibaba Cloud Model Studio/DashScope (wan2.5-i2v/t2v preview) and third-party providers (e.g., KIE; also integrated by several creator tools).

- Indicative pricing (Alibaba Cloud, Intl/Singapore): 480p $0.05/s, 720p $0.10/s, 1080p $0.15/s; free quota for trials.

- No-code hosts: Some platforms expose WAN 2.5 with 5s/10s options and download-ready results.
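
Per-second pricing makes budgeting simple arithmetic: cost = rate × seconds × number of clips. The sketch below uses only the indicative Alibaba Cloud rates quoted above. The function name and structure are illustrative, the free trial quota is not modeled, and the rates should be treated as a snapshot to confirm with your provider.

```python
# Indicative per-second rates quoted above (Alibaba Cloud, Intl/Singapore).
# These are a snapshot for illustration; confirm current pricing before budgeting.
RATE_USD_PER_SECOND = {"480p": 0.05, "720p": 0.10, "1080p": 0.15}


def estimate_cost(resolution: str, seconds: float, clips: int = 1) -> float:
    """Return the estimated USD cost of `clips` clips lasting `seconds` each."""
    if resolution not in RATE_USD_PER_SECOND:
        raise ValueError(f"no listed rate for {resolution!r}")
    if seconds <= 0 or clips < 1:
        raise ValueError("seconds must be positive and clips must be at least 1")
    return round(RATE_USD_PER_SECOND[resolution] * seconds * clips, 2)


if __name__ == "__main__":
    # Twenty 10-second clips at 1080p: 0.15 * 10 * 20
    print(estimate_cost("1080p", 10, clips=20))
```

Remember that rejected or re-rolled generations are typically billed too, so a realistic budget multiplies the clip count by the number of takes you expect per shot.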

Special Features

- Native audio + lip-sync: Voices/SFX/music aligned to mouth movement and scene timing.

- Cinematic control: Natural-language camera moves (dolly, orbit, FPV, rack focus) and lighting/time-of-day controls when hosted with camera-control UIs (e.g., Higgsfield’s guides/presets). Where older models struggled with stability, newer foundation models are reported to be adopting concept-level object tracking, similar to Meta’s latest architecture, to keep objects consistent across frames.

- Better prompt comprehension: Clearer mapping from descriptive prompts to motion, composition, and mood vs. earlier WAN versions.
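
Since camera moves and lighting are expressed in natural language, it can help to assemble prompts from reusable parts so that shots stay consistent across takes. The following sketch is one way to do that. The phrasing attached to each move and the `camera:` / `lighting:` / `dialogue:` labels are assumptions made for illustration, not a documented WAN 2.5 or Higgsfield prompt syntax, so test how your host interprets them.

```python
from typing import Optional

# Illustrative wording for the camera moves named above; not an official vocabulary.
CAMERA_MOVES = {
    "dolly": "slow dolly-in toward the subject",
    "orbit": "smooth orbit around the subject",
    "fpv": "fast FPV fly-through",
    "rack_focus": "rack focus from foreground to background",
}


def build_prompt(
    subject: str,
    move: str,
    lighting: Optional[str] = None,
    dialogue: Optional[str] = None,
) -> str:
    """Join a scene description, a camera move, and optional cues into one prompt."""
    if move not in CAMERA_MOVES:
        raise ValueError(f"unknown camera move {move!r}")
    parts = [subject.strip().rstrip("."), f"camera: {CAMERA_MOVES[move]}"]
    if lighting:
        parts.append(f"lighting: {lighting}")
    if dialogue:
        # Spoken lines matter for models that generate audio in the same pass.
        parts.append(f'dialogue: "{dialogue}"')
    return ". ".join(parts) + "."


if __name__ == "__main__":
    print(build_prompt("A barista pours latte art", "dolly", lighting="golden hour"))
```

Keeping the move vocabulary in one dictionary makes it easy to swap in the exact preset names your host exposes.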

Quick Compare (At A Glance)

| Capability | WAN 2.5 | Veo 3.1 (hosted) | Sora 2 (hosted) |
|---|---|---|---|
| Native audio/lip-sync | Yes (one-pass) | Host-dependent | Host-dependent |
| Typical duration | Up to ~10s | ~8–12s (varies by host) | ~10–12s (varies by host) |
| Resolutions (native gen) | 480p–1080p | Often 1080p | Often 1080p |

Limitations & Caveats

- Length & resolution caps: Most hosts cap WAN 2.5 at ≤10s and ≤1080p; “4K” claims are usually upscales, not native generation. Verify per provider.

- Host variance: Camera-control presets, audio options, and credit costs vary by platform/API; results and queue times depend on the host.

- Multi-speaker scenes: Lip-sync is strong on single speakers; complex mixes may require manual polish in post (tooling still evolving). (Inference based on host notes and specs above.)
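
The length and resolution caps above translate into a simple planning rule: split anything longer than 10 seconds into several clips, and generate at the nearest native resolution, upscaling afterward if the deliverable is larger. The sketch below encodes that rule under the caps cited in this section. The names `plan_shot` and `ShotPlan` are hypothetical, and your host may only offer fixed durations (such as 5s or 10s), in which case round the per-clip length up to the nearest option.

```python
import math
from dataclasses import dataclass

MAX_CLIP_SECONDS = 10             # common WAN 2.5 host cap cited above
NATIVE_HEIGHTS = (480, 720, 1080)  # native generation resolutions cited above


@dataclass
class ShotPlan:
    clips: int               # number of separate generations needed
    seconds_per_clip: float  # even split of the requested duration
    generate_height: int     # native resolution to request from the host
    needs_upscale: bool      # True when the target exceeds native output


def plan_shot(total_seconds: float, target_height: int) -> ShotPlan:
    """Plan how to reach a target duration and resolution within the caps."""
    if total_seconds <= 0 or target_height <= 0:
        raise ValueError("duration and height must be positive")
    clips = math.ceil(total_seconds / MAX_CLIP_SECONDS)
    # Pick the smallest native height that covers the target, else the largest.
    generate_height = next(
        (h for h in NATIVE_HEIGHTS if h >= target_height), NATIVE_HEIGHTS[-1]
    )
    return ShotPlan(
        clips=clips,
        seconds_per_clip=total_seconds / clips,
        generate_height=generate_height,
        needs_upscale=target_height > NATIVE_HEIGHTS[-1],
    )


if __name__ == "__main__":
    # A 25-second 4K request becomes three 1080p clips plus an upscale step.
    print(plan_shot(25, 2160))
```

Note that separately generated clips will not share continuity automatically, so a multi-clip plan usually implies some editing work to match shots.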

Why Pick WAN 2.5

- All-in-one passes (picture + sound) for quick social spots, trailers, product clips.

- Flexible access: use no-code hosts to experiment, then scale via API (DashScope/KIE) when you need automation and volume.

Bottom line: Choose WAN 2.5 when speed + lip-sync matter; plan for 10s/1080p constraints and confirm your host’s camera presets and pricing before production.

Platform Comparison and Feature Matrix

Below is a side-by-side view of Higgsfield as a hosted studio versus three major models frequently used within it—WAN 2.5, Sora 2, and Veo 3.1—so you can compare audio, duration, resolution, controls, and access at a glance. Specs vary by host, so we cite representative host docs and vendor pages where limits are explicitly stated.

| Feature | Higgsfield (host studio) | WAN 2.5 | Sora 2 (via Higgsfield) | Veo 3.1 (via Higgsfield) |
|---|---|---|---|---|
| Model integration | Aggregates Sora 2, Veo 3.1, WAN, Kling, Minimax in one workspace. | Single model (text/image→video). | Model accessible within Higgsfield. | Model accessible within Higgsfield. |
| Audio generation | Host-level; depends on chosen model. | Native one-pass audio + lip-sync. | Host claims audio sync support. (Varies by host) | Audio supported on host. |
| Typical duration | Varies by model/preset in app. | Up to ~10s typical. | Host notes “support for longer durations”; common host presets are short (≈10–12s). | Host page lists 4/6/8s; external reporting suggests longer (up to ~1 min) is rolling out on some providers. |
| Resolution (native gen) | Up to 1080p on most hosted models. | 480p/720p/1080p. | Commonly 1080p on hosts. | 720p/1080p at 24 fps on host. |
| Camera controls | Built-in DOP/cinematic presets (dolly/orbit/FPV, focus, lighting) that apply to supported models. | Advanced camera presets & lighting on hosts that expose them. | Uses Higgsfield’s control layer where applicable. | Uses Higgsfield’s control layer where applicable. |
| Access | Public plans + optional 72-hour Unlimited Pass. | Available via APIs/hosts (e.g., Alibaba Cloud, other providers). | Accessed via Higgsfield (hosted). | Accessed via Higgsfield (hosted); broader rollout reported. |
| API availability | Hosted studio (no public API docs). | API via providers (e.g., Alibaba Cloud Model Studio). | Not publicly documented; typically hosted only. | Not publicly documented; typically hosted only (Higgsfield). |

Notes & sources:

- Higgsfield model lineup/blog and product pages confirm integrated models and host features (DOP controls, access tiers).

- WAN 2.5 specs (duration/resolution/audio) and API/pricing are documented by Scenario and Alibaba Cloud.

- Sora 2 and Veo 3.1 host pages describe availability and typical host constraints; TechRadar reports longer Veo 3.1 durations emerging on some services.

Roadmap Analysis and Development Timeline

Image Source: Wan Higgsfield

Cut through promises vs. reality for Higgsfield and WAN 2.5—what’s coming next, what actually works today, and where the gotchas live. Use these notes to set realistic specs, choose the right platform for your workflow, and avoid surprises around access, pricing, and policy limits.

Roadmap (Near Term)

- Higgsfield (hosted studio): Focused on multi-model access (Sora 2, Veo 3.1, WAN, etc.), workflow tools (DoP presets, Upscale/Enhancer), and short-form presets; current guidance centers on 1080p generations with an optional 72-hour Unlimited sprint pass.

- WAN 2.5 (model): Hosts/APIs emphasize one-pass video+audio with ≤10s clips at 480p/720p/1080p; incremental quality and control updates are rolling out across providers.

Claimed vs. Demonstrated

- Demos vs. typical use: Higgsfield’s showcase looks polished, but real output depends on the chosen engine and preset; e.g., Veo 3.1 commonly exposes 4/6/8-second options at 720p/1080p in the host UI. WAN 2.5 is broadly capped at 10s.

- Camera/audio controls: DoP-style camera moves and lighting exist at the host layer; WAN 2.5 natively returns synced voices/SFX/music in one pass.

Risks & Policy

- Rights & transparency: Public pages focus on features, not exhaustive training-data disclosures—confirm commercial terms per host/provider before client delivery.

- Moderation/queues: Host moderation may block edge prompts; jobs are asynchronous and queue-dependent.

Technical Limits You’ll Feel

- Length/resolution: Treat 1080p and ≤12s (Veo presets) / ≤10s (WAN) as today’s default; plan upscaling for “4K” asks.

- Access: Higgsfield offers public paid plans and trials; WAN 2.5 is accessible via hosts and API (e.g., Alibaba Cloud/KIE) with clear per-second pricing tiers.

Positioning (Who Should Use What)

- Higgsfield: Best for A/B testing multiple top models in one studio with built-in camera tools and utilities; scale bursts with the Unlimited pass.

- WAN 2.5: Ideal when speed + native lip-sync matter for short social spots; start on no-code hosts, then automate via API as you scale.

Bottom line: Ship with 1080p, ≤10–12s expectations, verify host presets before promising specs, and budget upscaling for higher-res deliverables.

Complementary AI Video Platforms

Several established platforms can supplement these emerging tools for comprehensive video production workflows. These alternatives offer different strengths that complement Higgsfield and Wan AI’s capabilities.

Image Source: HeyGen

HeyGen

HeyGen specializes in AI avatar creation and talking head videos with professional presentation quality. The platform excels at corporate communication and educational content where human-like presenters enhance engagement.

Write your script (or get some help with built-in ChatGPT), and watch an avatar read it flawlessly in one take. Need to change something? No reshoots necessary, just edit the text.

Image Source: InVideo AI

InVideo AI

InVideo AI focuses on template-driven video creation with extensive customization options for marketing content. The platform provides robust editing tools and stock media integration for polished promotional videos.

Instantly turn your text inputs into publish-worthy videos. The InVideo AI video generator simplifies the process, generating the script and adding video clips, subtitles, background music, and transitions.

Image Source: DeepBrain

DeepBrain

DeepBrain delivers hyper-realistic AI humans for enterprise applications and customer service scenarios. The platform emphasizes natural conversation flows and multilingual support for global business communications.

Realistic AI avatars, natural text-to-speech, and powerful AI video editing capabilities all in one platform: everything you need to create and edit great educational videos.

Image Source: Elai

Elai

Elai combines AI video generation with learning management system integration for corporate training content. The platform streamlines educational video production with automated translations and interactive elements.

Build customized AI videos with a presenter in minutes, without a camera, studio, or green screen.

Conclusion

Higgsfield AI and Wan 2.5 represent significant advances in AI video generation capabilities. Both platforms show promise but require further development for professional production workflows. Early adopters should evaluate current limitations against specific use cases before committing to either platform.

Ready to create smarter, faster, and more cinematic content? Discover exclusive Softlist.io picks and deals on AI tools that elevate your workflow without replacing your creative voice. Explore our Top AI Video Editors guide to find studio-ready options for camera control, native audio, and seamless post-production.

FAQs

What Are Higgsfield And Wan AI?

Higgsfield is a hosted AI video studio that puts several models (Sora 2, Veo 3.1, WAN 2.5, Kling, Minimax) behind one interface, alongside its own camera-control and upscaling tools. WAN 2.5, the Wan AI model covered here, is a text/image-to-video model that generates video and synchronized audio in a single pass.

How Do These AI Video Tools Work?

You supply a text prompt or a reference image, and a generative model renders a short clip, typically up to about 10 seconds at up to 1080p. Jobs run asynchronously on the host, so completion time depends on the queue. WAN 2.5 also produces dialogue, ambience, music, and lip-sync in the same pass.

What Makes Higgsfield Unique?

Higgsfield stands out as a multi-model hub: you can run the same prompt across Sora 2, Veo 3.1, WAN 2.5, and other engines from one workspace, then apply built-in cinematic camera presets and Upscale/Enhancer utilities. That makes it a practical fit for agencies and creators who want to A/B test engines without juggling multiple sites or APIs.

What Are The Key Features Of Wan AI?

WAN 2.5 offers native audio generation with lip-sync in a single pass, natural-language camera moves (dolly, orbit, FPV, rack focus) on hosts that expose them, and clips of up to about 10 seconds at 480p, 720p, or 1080p. It is available through no-code hosts and through APIs such as Alibaba Cloud Model Studio.

How Do These Tools Compare To Traditional Video Editing Software?

Traditional video editing software assembles footage you already have, while Higgsfield and WAN 2.5 generate the footage (and, with WAN 2.5, the audio) from prompts. This lets users produce short clips faster and with less technical expertise, though complex scenes may still need manual polish in post.

Who Can Benefit From Using Higgsfield And Wan AI?

Both tools cater to a wide range of users, including solo creators, marketing teams, and small to medium-sized businesses. Their user-friendly interfaces and powerful features make them suitable for anyone looking to enhance their video content without extensive editing skills.

Are There Any Costs Associated With These Tools?

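Yes. Higgsfield has public paid plans, Basic ($9), Pro ($29), and Ultimate ($49), plus credit packs and an optional 72-hour Unlimited pass. WAN 2.5 pricing depends on the host; Alibaba Cloud’s indicative rates are $0.05/s at 480p, $0.10/s at 720p, and $0.15/s at 1080p, with a free quota for trials. Confirm current pricing with each provider before committing.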

What Is The Future Of AI Video Tools Like Higgsfield And Wan AI?

The future of AI video tools looks promising, with continuous advancements in technology expected to enhance functionality and user experience. As these tools evolve, they will likely offer even more sophisticated features, making video production accessible to an increasingly broader audience.